Space exploration

This is a very important moment as we watch astronauts going back to the Moon. Let's reflect on this a little. The picture above is my favourite from this mission; it really gives the perspective that our little world is quite lonely in the darkness of space.

More pictures are in the flyby gallery by NASA.

In December 1968, Apollo 8 flew the first mission of this kind: go to the Moon, circle around it and come back.

More precisely, Apollo 8 spent 20 hours in lunar orbit, making 10 revolutions around the Moon. This was done to help decide where to land on the Moon in the upcoming missions. Unlike Apollo 8, the Artemis II mission didn't stay in orbit. The crew were mostly testing the Orion capsule's life support systems and used a "free-return trajectory", meaning that Orion didn't need to burn any fuel on the far side of the Moon to come back home. The primary objectives didn't require them to stay, so they went straight back home after the first turn.

Instead of Apollo 8, we should actually compare Artemis II to the Apollo 7 mission, which was the first crewed mission in the Apollo series to reach space. They had similar objectives: test the spacecraft's life support systems and conduct a live stream to the world from space. This time Artemis II took 10,000 pictures and named some of the craters on the far side of the Moon.

The live streams were really great; they were brutally honest and transparent.

Live coverage

It was shocking to watch how honest the comms were during the flight. Many issues with flight operations were discussed live: the email problems of the first day, the toilet and the windows. This was very interesting from an engineering point of view and gave us a glimpse of how complex even such a simple-sounding fly-by around the Moon really is.

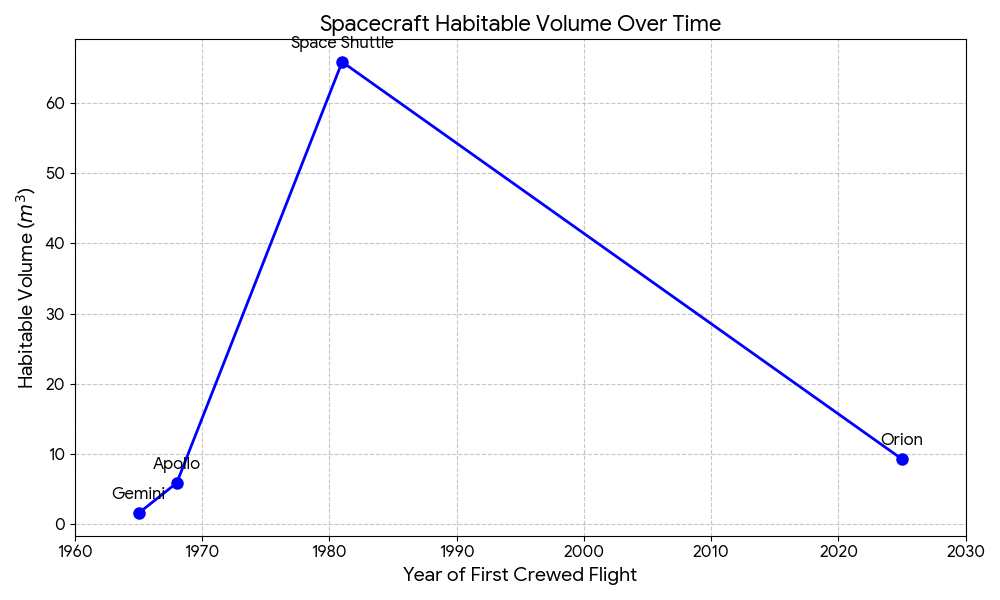

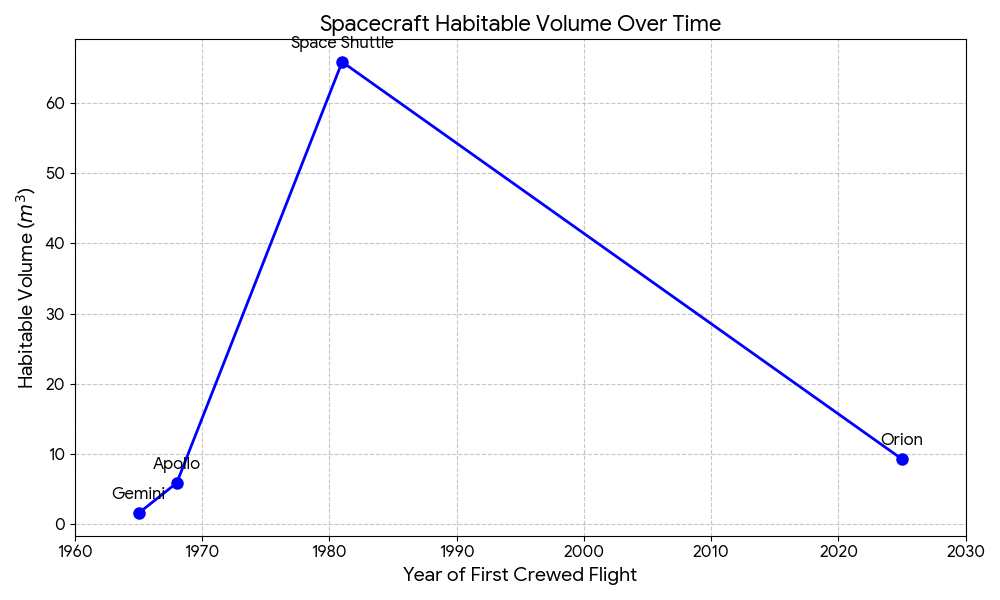

Lack of phase change

For all this great achievement, it feels like there has been no phase change in this technology in 58 years. In fact, we seem to have gone back in time.

Here is the comparison:

| Feature | Gemini | Apollo | Space Shuttle | Orion |
| --- | --- | --- | --- | --- |
| First Crewed Flight | 1965 | 1968 | 1981 | 2025 (Planned) |
| Crew Capacity | 2 | 3 | 7 (typical) | 4 |
| Habitable Volume | ~55 cu ft (1.6 m³) | ~210 cu ft (5.9 m³) | ~2,325 cu ft (65.8 m³) | ~330 cu ft (9.3 m³) |
| Vol. per Person | ~27.5 cu ft | ~70 cu ft | ~332 cu ft | ~82.5 cu ft |
| "Feel" | VW Beetle | Walk-in closet | Two-story condo | Large Van |
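The per-person row is simply habitable volume divided by crew size. A minimal Python sketch that recomputes it from the figures in the table (the dictionary and function names are mine, chosen for illustration):

```python
# Habitable volume (cu ft) and crew size, copied from the comparison table.
spacecraft = {
    "Gemini": (55, 2),
    "Apollo": (210, 3),
    "Space Shuttle": (2325, 7),
    "Orion": (330, 4),
}


def volume_per_person(volume_cu_ft, crew):
    """Habitable volume available to each crew member, in cubic feet."""
    return volume_cu_ft / crew


per_person = {
    name: volume_per_person(volume, crew)
    for name, (volume, crew) in spacecraft.items()
}

for name, value in per_person.items():
    print(f"{name}: ~{value:.1f} cu ft per person")
```

On these numbers Orion offers slightly more room per person than Apollo, but only about a quarter of what the Shuttle did.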

It looks like we have a very rare phenomenon here, where a technology actually went back a phase over time. Orion still has the feel of a first-generation spaceship. I hope this turns out to be more like a sine wave, where we come back to proper spaceships later on.

The Space Shuttle program was closed due to the high cost of maintenance and the ageing of the spacecraft. Interestingly, a repeated Space Shuttle launch cost about $0.5-1B, while an Artemis launch costs around $2-4B. The shortest time in which a shuttle could fly again was 54 days, so maybe it was not that bad in the end.

The mission message

I watched the first speeches by the astronauts back on Earth. You can tell they came back enlightened, different, better people. I think Christina Koch's message is the strongest. She said she had thought it through ahead of time, when they were in orbit and about to land, which demonstrates her forward-looking mindset.

Her message: we are all here together, planet Earth's crew, let's treat each other well!

It is a powerful message and I agree with it.

April 2026

Technology phases

The evolution of human-designed technologies follows the same kind of "laws" as biological evolution. One of these laws is the technology phase change: a fundamental change in the underlying technology which makes technological products drastically better. Sometimes it is called a "swap", when the new way immediately replaces the old way of doing things, and sometimes the phases co-exist for some time.

Examples

Photography

The most common example is digital photography. In the middle of the 20th century photography was in the hands of professional photographers. They would take a picture with the camera, transfer it from photographic film to paper, and then sell it framed to the end customer. The process was long, full of tricky moments, and hard for the general population to carry out even with modern tools.

Digital photography and the Internet made it possible to create a photo and publish it, sometimes reaching millions of people within seconds. This dramatically improved the time efficiency of the process, but the two technologies still coexist.

Bicycles

My favourite example is the bicycle. The first version was created in 1817 and didn't have pedals. Riders had to push off the ground with their feet to move. Despite this not very convenient propulsion system, bicycles quickly became very popular; it was simply great fun to ride them on the streets. Later on people added a huge wheel to the bicycle, because a small wheel was too hard to pedal. And only later did we get the pedals, chain and handlebars that made the bicycle convenient to ride for a long time and shaped it into the phase we know these days.

Recently, people added electric motors to bicycles and made them go even further. There is even an argument that electric bicycles made longer distances much more accessible: you don't need to be very fit to ride 30 km anymore, which made people use bicycles even more. In 2020 the number of people commuting to work by bicycle increased by 21%.

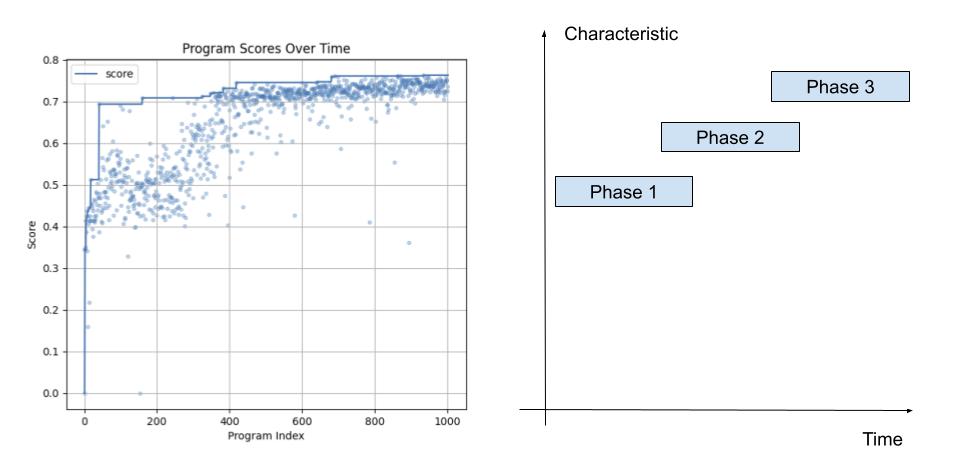

Code evolution

As we conduct experiments with code evolution, we can definitely observe phase changes in the evolved programs, regardless of the nature of the problem we work on. It is truly fascinating how artificial evolution leads us to observe phase changes in the programs it finds.

As the program discovery process unfolds, it explores new features of the program that lead to better scores, and it rarely drops them unless more efficient or robust features are found.

March 2026

How NanoClaw makes you think

What is NanoClaw?

Created by Gavriel Cohen as a secure, lightweight alternative to the massive OpenClaw framework, NanoClaw is sparking a lot of philosophical and technical debates right now. I personally prefer to have a simple yet well-understood and containerised bot, to have more control over the risks. Underneath it still uses Claude Code (including skills) for short and medium coding tasks, but generally it can be a computer assistant of any kind for you or a team.

Here are three key reasons why this project is so interesting and seems useful:

Radical Minimalism and "Disposable" Code

Unlike OpenClaw’s bloated 400,000-line architecture, NanoClaw strips everything down to just a few thousand lines of code. This is a massive paradigm shift: the codebase is intentionally small enough to fit entirely inside a modern AI’s context window. But most importantly, it can be understood by humans. It makes you realize that in an era where AI can instantly understand and rewrite entire systems, code is becoming cheap and disposable. We no longer need to write code to last for a decade; we just need it to be simple enough for an AI to rewrite tomorrow. While expanding the functionality of the bot, you also start to think about how to keep it compact yet simple.

True Security Through OS-Level Isolation

Giving an AI agent control over your computer is inherently risky. While older frameworks rely on flimsy application-level allowlists (running everything in a shared process), NanoClaw forces every single agent into its own isolated sandbox (using Linux Docker or Apple Containers). It highlights a critical realization for the future of AI: you shouldn't have to defend yourself against your own personal assistant.

The Shift from Plugins to "Skills"

Instead of building a complex, rigid plugin architecture, NanoClaw relies on direct AI modification. If you want to add a feature (like Telegram integration), you don't install a pre-packaged plugin. Instead, you use a "skill"—essentially a Markdown file with instructions that tells a coding agent exactly how to modify your local codebase to build that feature. It blurs the line between "user" and "developer."

How does working with NanoClaw make you think?

Plan for the agent to change according to your future/current needs first.

When you have a project at hand you should stop, think, design and plan it carefully. As your “minion”, NanoClaw is going to help you with this project only if you give it the right tools and skills. So this makes you think ahead about what kind of skills the agent itself needs in order to help you most efficiently. We found it very useful for NanoClaw to be able to handle images: see images from your communication channel and send images (for example, rendered web site pages) back to you.

Plan ahead your next five steps.

Now the planning step becomes extremely important, as we can delegate all S and M size tasks to the agent. So we had better have a good sequential plan, with some parts of the implementation run in parallel. Typically this means that we have a proper design document that describes the protocol of system interactions, and the rest is written in accordance with it.

Delegate intelligently

A recent Google paper goes into depth on intelligent delegation, which can improve your delegation efficiency when dealing with people or bots. You need to think about how to delegate the set of tasks so that it minimises your time.

Move Beyond Simple Task Decomposition to Include Accountability: Intelligent delegation is more than just breaking down a complex problem into smaller sub-tasks (which current systems often do using simple heuristics). It necessitates a structured framework that explicitly incorporates the transfer of authority, responsibility, and accountability. Delegators must provide clear specifications regarding roles and boundaries to ensure that outcomes can be properly managed and audited.

Implement Adaptive and Robust Mechanisms: Real-world environments are unpredictable, and early AI deployment methods tend to be ad hoc and brittle. To delegate intelligently, systems must be dynamically adaptive. This means incorporating continuous performance monitoring, adjusting based on real-time feedback, and featuring robust fault-tolerant designs (like failovers and re-delegation) to safely handle unexpected errors or environmental changes.

Center on Risk Assessment and Trust: Delegation inherently involves risk. An intelligent delegation framework requires accurate capability matching (knowing exactly who or what to assign a task to) and mechanisms for establishing trust between the delegator and the delegatee—whether they are humans or other AI agents. Trust moderates the risk and ensures that distributed tasks are successfully completed under specific constraints without compromising safety.

Our mix of skills

- Telegram (due to the availability of a client for Linux).

- Send and receive pictures, which is critical for our workflow.

- Integration with Linear.app for task management.

- Integration with GitHub for source control management.

I really like to keep it minimalistic for now and work with just a few key projects with the bot.

February 2026

AlphaEvolve as a new type of scaling

Despite large investments and rapid development in AI, there has recently been talk of a scaling wall, with the narrative that key AI labs are hitting the limits of the scaling laws. People might read this as progress in AI stopping, or as another AI winter coming soon. Let's talk about the types of scaling we see on the market:

- Larger models pre-trained with exponentially more data. This is the typical scaling mode most people talk about. As Internet data is exhausted, increasing the pre-training dataset exponentially seems to take an exponential amount of effort, and we will not see this type of progress any time soon.

- Thinking longer. Taking more time to split a task into smaller steps with reasoning. Previous-generation reasoning models would produce a lot of tokens, so now there is a race in the opposite direction, to produce fewer but more "sound" tokens. Still, this is one of the current methods for improving LLM scores without extra data.

- RL post-training. Despite the large success of RL post-training, there is a lot of discussion about the effectiveness of the method and about how we should train LLMs on easily verifiable tasks. Yet currently this is the go-to method for model training, and it can help increase the amount of post-training data for LLMs. Together with data mixing and weighting schemes, this is what is used for post-training.

All of these are great topics for discussion; however, we also observe a more recent scaling mode that is related to iterative solving. It is a sub-type of point 2 above, but it uses the ability of an LLM to work on a problem iteratively and exploits the skew between input and output tokens. Currently most models can take up to 1M input tokens, but produce only 64K output tokens.

AlphaZero moment in AI

We have yet to see the AlphaZero/MuZero moment in AI. There are attempts to organize AI self-play and get better training data out of this process, but so far all self-evolving agents have lacked progressively increasing task complexity, and we do not yet see them reaching AlphaZero/MuZero-level performance on a significant number of real-world tasks.

For a Minecraft-playing agent there was work in that direction proposed in the Voyager paper; however, Minecraft is not real life, and it has a designed progression of achievements so that humans can feel the improvement when moving from a wooden to an iron pickaxe.

Another way of scaling - AlphaEvolve

A new emerging way of scaling AI is to use 1000x repetition on a task where the problem can be expressed as a Python program. Here we ask the LLM to change the initial code by providing only diffs (which helps to deal with the limited output context). AlphaEvolve is a method of long thinking by exploring and scoring LLM solutions at scale.

The AlphaEvolve paper has been out for a year now, and we observe numerous open-source clones and a lot of interest from the community.

These projects replicate the "Evolutionary LLM" loop—where an LLM proposes code changes, an evaluator tests them, and only the "fittest" code survives to the next generation.

- OpenEvolve (by Asankhaya Sharma): The lead candidate, specifically designed as an open-source implementation of the AlphaEvolve system. Key features include distributed evolutionary algorithms, multi-language support, and the ability to evolve entire codebases rather than single functions.

- OpenAlpha_Evolve: The community alternative. A highly active community fork/re-implementation that focuses on extensibility, including adding support for local LLMs like Ollama and LMStudio.

- ShinkaEvolve: Developed by Sakana AI.

- CodeEvolve: A sibling project to OpenEvolve, focusing specifically on agentic code optimization and "hall-of-fame" storage for the best discovered algorithms.
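The propose, evaluate and select loop that these projects replicate can be sketched in a few lines of Python. This is a toy under loud assumptions, not how AlphaEvolve or any of the clones above is implemented: the "program" is just a list of numbers, `propose_change` is a hypothetical random stand-in for the LLM call, and `evaluate` is a made-up scoring function.

```python
import random


def evaluate(program):
    """Evaluator: score a candidate. Here the 'program' is a list of numbers
    and the score is higher the closer the list is to all zeros."""
    return -sum(x * x for x in program)


def propose_change(parent, rng):
    """Hypothetical stand-in for the LLM call: returns a small 'diff' as
    (index, new value). A real system would prompt a model with the parent
    code and its score and parse the diff it returns."""
    i = rng.randrange(len(parent))
    return i, parent[i] + rng.uniform(-1.0, 1.0)


def apply_diff(parent, diff):
    """Apply the diff to a copy of the parent, leaving the parent intact."""
    i, value = diff
    child = list(parent)
    child[i] = value
    return child


def evolve(initial, generations=200, population_size=8, seed=0):
    rng = random.Random(seed)
    population = [(evaluate(initial), initial)]
    for _ in range(generations):
        _, parent = rng.choice(population)  # pick a survivor to modify
        child = apply_diff(parent, propose_change(parent, rng))
        population.append((evaluate(child), child))
        # Selection: only the fittest candidates survive to the next round.
        population.sort(key=lambda pair: pair[0], reverse=True)
        population = population[:population_size]
    return population[0]


best_score, best_program = evolve([3.0, -2.0, 5.0])
print(best_score, best_program)
```

Because the best candidate is never dropped, the top score can only stay the same or improve from one generation to the next, which is the property that makes running the loop at scale worthwhile.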

The work has already been cited 350 times, and people are working on extensions.

Future work

I think we are just scratching the surface with this type of scaling; however, we as humanity have already tried a few things.

There is work on applying AlphaEvolve to math frontiers (arXiv:2511.02864). In this paper they explored 67 math problems and found optimal solutions for some of them.

In this work, they tried applying Deep Think before AlphaEvolve to explore various approaches to solving the problem.

Fundamentally, AlphaEvolve is a first attempt at combining LLM prior knowledge of coding with genetic algorithms, and I believe we are going to see further work in this area: not just making this scaling framework available to scientists, but also improving the speed of exploring new frontiers and the diversity of the solutions.

January 2026

Verification limits

Recently there were a few good articles on the Verifier Rule, which points out that some tasks are easier to verify than to solve and some are the opposite. So AI, through RL post-training, is set to solve most of the easily verifiable tasks soon, leaving the tasks with unfavourable verification asymmetry to be automated later as long-tail, long-term research.

RL here is just a method of getting the verification signal back into the model weights. We ask the model to try solving a task many times, and when the verifier is happy we update the weights according to the reward signal.
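A toy sketch of that loop in Python, assuming a deliberately tiny setup: the "model" is just a list of unnormalised preferences over ten candidate answers, the task is to find a digit whose square is 49, and the update rule (add the reward to the sampled answer's weight) is my simplification, not a real RL algorithm for LLMs.

```python
import random


def verifier(answer):
    """Cheap check: is this a solution to x * x == 49?"""
    return answer * answer == 49


def train(attempts=200, learning_rate=1.0, seed=0):
    rng = random.Random(seed)
    candidates = list(range(10))
    weights = [1.0] * len(candidates)  # toy "policy": unnormalised preferences
    for _ in range(attempts):
        # The model tries the task once by sampling an answer.
        answer = rng.choices(candidates, weights=weights)[0]
        reward = 1.0 if verifier(answer) else 0.0
        # Reinforce only the answers the verifier accepted.
        weights[answer] += learning_rate * reward
    total = sum(weights)
    return [w / total for w in weights]


policy = train()
print(f"P(answer=7) after training: {policy[7]:.2f}")
```

The point of the sketch is only the shape of the loop: attempts are cheap, the verifier is cheaper, and the reward is the only thing that flows back into the weights.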

Tasks that are easier to verify than solve

- Sudoku and Logic Puzzles: As mentioned in the article, solving a Sudoku puzzle requires navigating a large tree of possibilities and constraints. However, once the grid is filled, verifying the solution takes mere seconds: you simply check if every row, column, and box contains digits 1–9.

- Software Engineering: Writing the code to build a complex platform (like Instagram) takes teams of engineers years of development. In contrast, verifying that the "solution" works can be done by a layperson in seconds simply by opening the app and seeing if the feed loads.

- Cryptographic Hashing (Password Cracking): In computer security, finding a password that matches a specific "hash" is computationally expensive (often impossible without brute force). However, if someone provides a candidate password, the system can verify it instantly by running the hash function once.

- Math Competition Problems: Solving a complex geometry or algebra problem might take hours of creative thinking and derivation. However, if you are given a proposed final answer (and potentially the steps), plugging the numbers back into the original equation to see if they hold true is often much faster.

- Lock Picking vs. Key Usage: Physically, "solving" a lock without a key is a difficult skill requiring time and manipulation. "Verifying" the solution (using the correct key) is instantaneous: the lock either turns or it doesn't.
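To make the Sudoku case concrete, here is a minimal verifier in Python. The function name and the test grid are mine; the grid is generated from a simple shifting pattern only so that there is a known-valid solution to check.

```python
def is_valid_sudoku(grid):
    """Return True if a filled 9x9 grid is a correct Sudoku solution."""
    digits = set(range(1, 10))
    rows = [set(row) for row in grid]
    cols = [set(col) for col in zip(*grid)]
    boxes = [
        {grid[r][c] for r in range(br, br + 3) for c in range(bc, bc + 3)}
        for br in range(0, 9, 3)
        for bc in range(0, 9, 3)
    ]
    # Every row, column and 3x3 box must contain exactly the digits 1-9.
    return all(group == digits for group in rows + cols + boxes)


# A known-valid grid built from a shifting pattern, used only as test data.
solved = [[(3 * (r % 3) + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]
print(is_valid_sudoku(solved))

# Swapping two cells in the first row keeps that row valid
# but breaks the two columns involved.
broken = [row[:] for row in solved]
broken[0][0], broken[0][1] = broken[0][1], broken[0][0]
print(is_valid_sudoku(broken))
```

The check touches each of the 81 cells three times, whatever the puzzle, while solving may need a search over a very large tree. That gap is the asymmetry the list above describes.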

Tasks that are easier to solve than verify

- Generative Text / Essays (Brandolini’s Law): It is very fast to write a convincing-sounding essay or blog post filled with statistics. It takes an order of magnitude more time for a human to verify that every fact, citation, and figure in that essay is actually correct.

- Scientific Hypotheses: It is incredibly easy to propose a new diet (e.g., "Eating only blueberries improves memory"). It takes years of rigorous clinical trials, control groups, and data analysis to verify whether that hypothesis is scientifically true.

- Code Security: A developer can write a "solution" to a coding problem in minutes that compiles and runs. However, verifying that the code is completely secure and free of vulnerabilities (like memory leaks or edge-case bugs) is much harder and often technically non-trivial.

- Legal Accusations: In a courtroom setting, it is often easier to invent a narrative or "theory of the crime" (the solution) than it is to verify it through the collection of forensic evidence, witness testimony, and cross-examination.

- Predictions: It is easy to generate a prediction (e.g., "This stock will double in value by next year"). Verifying this solution is impossible in the present; it requires waiting for the passage of time to see if the prediction materializes.

This has an interesting implication for which industries are going to be automated by AI. If an industry's product verification cycle is long and requires manual labour, then the industry will be automated only when its verification asymmetry is either reduced or simulated well.

Industries to automate much later

- Pharmaceuticals (Drug Discovery): A chemist can design a new molecular compound (the solution) in a day. However, verifying that the drug is safe and effective for humans requires a decade of clinical trials costing billions of dollars. There are, though, labs like Isomorphic Labs that aim to speed up the process.

- Civil Engineering (Infrastructure): Pouring a concrete bridge deck is a straightforward solution. Verifying the structural integrity and long-term fatigue resistance of that bridge over a 50-year lifespan is a massive undertaking involving sensors and periodic inspections.

- Aerospace (Component Manufacturing): Manufacturing a single turbine blade for a jet engine is automated and fast. Verifying that the blade has zero microscopic fissures, which could cause a catastrophic failure mid-flight, requires expensive X-ray and ultrasonic testing.

- Environmental Policy (Carbon Offsets): A company can easily claim to be "carbon neutral" by purchasing offsets. Verifying that those trees were actually planted, are still alive, and wouldn't have been planted anyway (additionality) is an ongoing global monitoring challenge.

- Deep-Sea and Space Exploration: Proposing a mission or a landing site is a matter of calculation. Verifying the actual conditions of that environment (e.g., checking for life under the ice of Europa) is exponentially more difficult than the theoretical plan.

- Food Safety (Supply Chain): A factory can produce thousands of jars of peanut butter daily. Verifying that every single jar is free of Salmonella or heavy metals requires complex sampling and lab work that lags far behind production speed.

- Academic Peer Review: A researcher can write a paper in a few months. Verifying that the data isn't fraudulent and that the experiments are replicable often takes the scientific community years of follow-up study, especially in modern physics.

So it seems we need some foundational work in these industries to enable quicker progress there.

How can we enable easier/quicker verification for these industries?

December 2025

AI x Robotics in Austin, TX

According to Statista, the current robotics market is $50B and it is going to grow to $220B by 2030 (source). Essentially, more than 4x growth is expected in 5 years, not too bad.
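The growth implied by these two figures can be checked with a few lines of Python. The only inputs are the $50B and $220B figures and the 5-year horizon from the text; the function name is mine.

```python
def growth_summary(start_value, end_value, years):
    """Return (overall multiple, compound annual growth rate)."""
    multiple = end_value / start_value
    cagr = multiple ** (1 / years) - 1
    return multiple, cagr


# Market size in billions of USD, as quoted above.
multiple, cagr = growth_summary(start_value=50, end_value=220, years=5)
print(f"Overall growth: {multiple:.1f}x")
print(f"Implied annual growth: {cagr:.1%}")
```

Put as a yearly rate, the forecast implies the market growing by roughly a third every year for five years in a row.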

To check the reality, I visited the AI x Robotics Symposium in Austin, Texas, which was kindly organized by UT Austin and Austin Robotics. A few highlights from the symposium:

- The Austin Robotics lab is fascinating, with 20+ different robots that they work with. They have a huge area with many different types of robots there. It is a very impressive workshop, with modern FDM and resin 3D printers and high-power laser cutters.

- Academia is quite pessimistic about the robotics market in the next 3-5 years. They say there are a lot of missing bits and pieces in robotics and they do not expect quick adoption due to these significant gaps in the technology. Keynote speakers insisted on slow uptake in the robotics market despite their deep involvement with robotics startups.

- There were quite a few entrepreneurs at the symposium, including those who have been building humanoid robots. Some demonstrated their humanoids on stage, and these still have quite limited capabilities.

- The humanoid as a form factor is quite questionable. On one hand, everything in our households is built for human hands and human height; on the other, hands are quite hard to program and control.

Roboticists are consistent in their opinion on slow adoption. Here are the key gaps that prevent quick adoption of robots:

- Lack of robust hand-inspired manipulators. We will know this is solved when we see a juggling robot that uses human-inspired fingers to throw and catch items.

- Slow deployment rate for any robotic application. We will know this is solved when we see robots freely used among humans.

- Robotic motors are still quite expensive, even though some robotics startups claim a reduction in cost in recent years.

- Lack of a coherent safety framework for humanoid and domestic robots.

- Lack of spatial awareness and spatial reasoning in modern LLMs, which we assume will be used for robot brains.

So despite the hype around robots, it seems we are going to see slow adoption, especially in our households, in the upcoming 5-10 years.

March 2025

First blog post

I am very excited to make my first blog post. The AI world is changing very rapidly; it changes the way we interact with information, creating enormous opportunities for creators and inventive minds. Here I am going to share some of the recent news, my thoughts and ideas in this space.

February 2025